TLDR

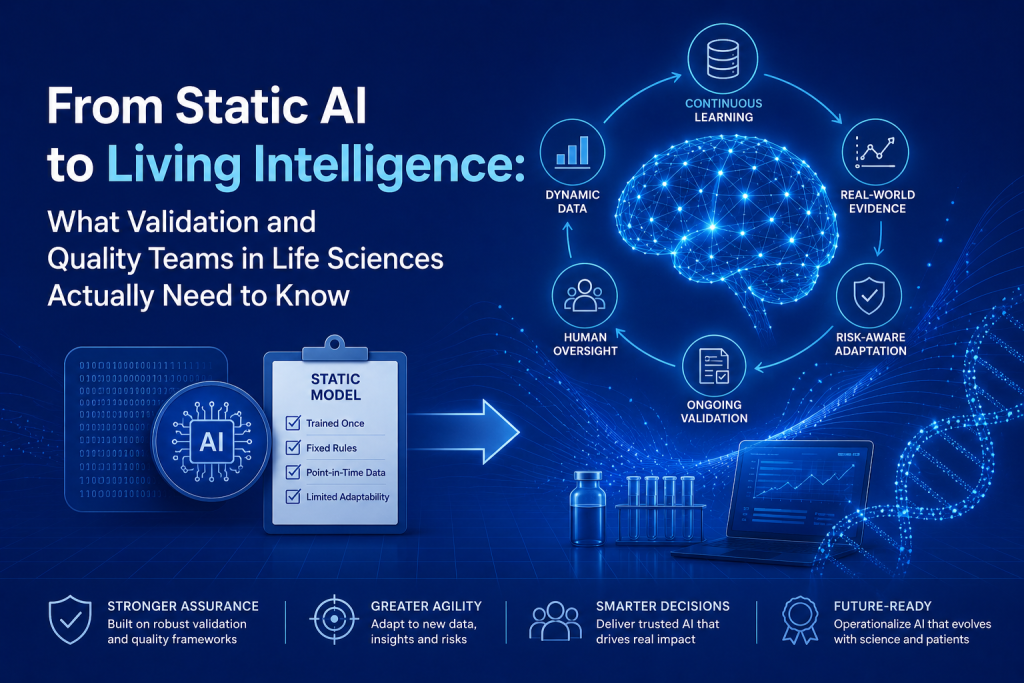

The key question for pharma and MedTech is no longer “Does this tool use AI?” but “What Intelligence Type does it use (rules‑based, static model, governed adaptive, or ‘Living Intelligence’), and how does that affect risk?”

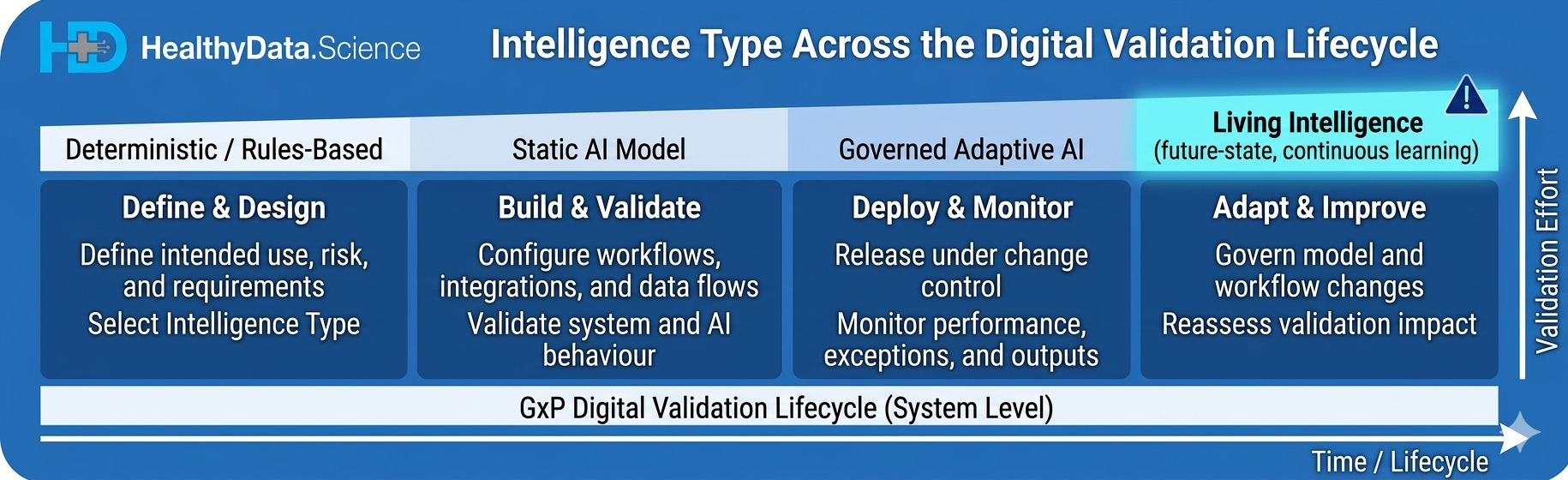

As digital validation tools embed AI (for test authoring, traceability, risk scoring, analytics), validation shifts from one‑time computerised system validation (CSV) toward lifecycle governance: defining allowed changes, monitoring models in production, and documenting how behaviour can evolve over time.

Intelligence Type gives validation and quality teams a practical handle to align effort with risk, so deterministic tools, static AI, and governed adaptive systems do not all receive the same test depth, change controls, and monitoring expectations.

Today, most validation platforms sit in the governed AI space rather than true Living Intelligence, but adopting Intelligence Type now prepares GxP organisations for more adaptive tools that will demand stronger, continuous validation and QA oversight.

There’s a shift happening in how AI gets used in life sciences, and it’s not just about better models.

Dr Andrée Bates has called it “Living Intelligence” [1][2]. The idea is simple but important: AI is moving from fixed, static tools toward systems that connect to real-time data, sensors, and adaptive decision loops. For validation, QA, and regulated digital operations teams, that distinction isn’t academic. It changes what you need to test, document, monitor, and control. [1][2]

The problem? We’re lumping everything together.

Right now, most pharma AI conversations treat very different technologies as if they’re the same thing [3]. A digital validation platform like Kneat Gx, an AI-enabled assistant like ValGenesis VAL, a periodically retrained quality-risk model, and a continuously adaptive decision system might all get labelled “AI” in the same meeting. But they create very different quality and governance obligations. [3][6][7]

That’s where the concept of Intelligence Type becomes genuinely useful. Instead of just asking “Does this tool use AI?”, teams can ask something more operational:

- What kind of intelligence is present?

- How stable is it between releases?

- How much of its behaviour can change after deployment? [3][6]

So what does “Living Intelligence” actually look like in validation?

In practice, it could mean tools that refine risk signals from recurring deviations, recommend evolving test strategies from project histories, or adjust workflow logic based on evidence from prior validation cycles [1][8]. That’s a fundamentally different beast from today’s digital validation platforms, which embed AI within governed, controlled workflows rather than relying on open-ended autonomous learning. [11][12][13][14]

The current generation of validation tools isn’t really “living” yet. But the direction of travel is clear.

Why this matters procedurally — not just philosophically

Here’s the practical implication your steering committee needs to understand.

A static or locked system can be validated through requirements traceability, functional testing, and release-based change management. That’s familiar territory. A more adaptive system requires something more: data lineage controls, model update governance, drift detection, output review processes, retraining triggers, performance thresholds, and clearly defined boundaries for acceptable change. [6][7][9]

If you apply a traditional CSV mindset to a system whose behaviour can shift in production, your documentation might look complete. But your assurance has gaps. [6][7]
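Drift detection is one of the adaptive-system controls listed above that lends itself to a small illustration. The sketch below uses the Population Stability Index (PSI), a common way to compare a production score distribution against the one seen at validation. PSI is our choice for illustration, not a method prescribed by the cited sources. The function names, the bin edges, and the 0.10 / 0.25 cut-offs are assumptions (the cut-offs follow a widely used rule of thumb), and a real system would set them in its own validation plan.

```python
import math
from typing import Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float],
                  floor: float = 1e-6) -> list[float]:
    """Share of values in each bin [edges[i], edges[i+1]); the last bin includes its upper edge."""
    counts = [0] * (len(edges) - 1)
    last = len(counts) - 1
    for v in values:
        for i in range(len(counts)):
            if edges[i] <= v < edges[i + 1] or (i == last and v == edges[-1]):
                counts[i] += 1
                break
    total = sum(counts)
    if total == 0:
        raise ValueError("no values fall inside the bin edges")
    # Floor empty bins so the logarithm in psi() is always defined.
    return [max(c / total, floor) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float],
        edges: Sequence[float]) -> float:
    """Population Stability Index between validation-time and production scores."""
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def drift_status(value: float, investigate_at: float = 0.10,
                 act_at: float = 0.25) -> str:
    """Map a PSI value to an action; thresholds are assumed, not regulatory."""
    if value >= act_at:
        return "trigger retraining review"
    if value >= investigate_at:
        return "investigate"
    return "stable"


# Hypothetical risk scores: the validation baseline versus a shifted production sample.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
production = [0.8, 0.85, 0.9, 0.95, 0.7, 0.9, 0.8, 0.6]
print(drift_status(psi(baseline, production, edges)))
```

The point for QA is less the statistic than the fact that the threshold and the resulting action are written down before the system goes live.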

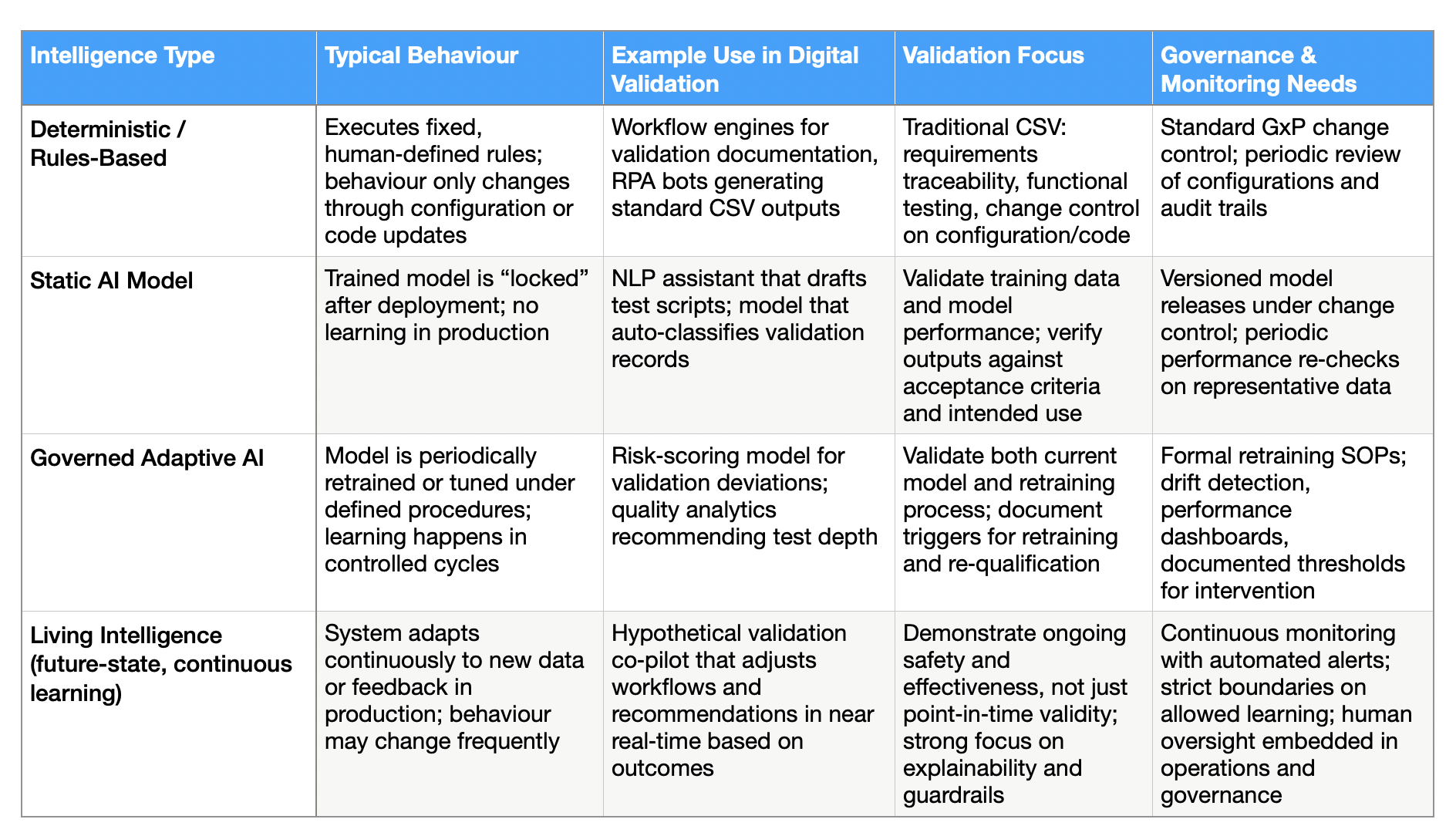

A practical taxonomy (because not all AI is created equal)

Here’s a simple framework that bridges the gap between data science language and QA language: [6][7][9][10]

Table 1: How Intelligence Type Shapes Validation and QA Effort

1. Deterministic / rules-based systems. Behaviour is fixed by human-authored logic. Validate it conventionally.

2. Static AI systems. A trained model, deployed in locked form. Changes only through versioned updates. Adds dataset qualification and output verification to your validation burden.

3. Governed adaptive systems. Retraining or performance tuning happens within documented controls and defined change procedures. You need to validate the process of future model changes, not just the current model state.

4. Living Intelligence systems. Ongoing learning or real-time adaptation is intrinsic to how the product works. This raises the hardest question: how do you demonstrate continuing control over a system that partly expresses its intelligence through ongoing adaptation? [6][7][10]

Concept inspired by GAMP 5, 2nd Edition AI/ML lifecycle guidance and CAI, “Use of Artificial Intelligence and Machine Learning (AI/ML) in Regulated Environments,” 2025.
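For teams that want to record this classification in an inventory or a risk register, the four categories can be expressed as a small lookup. The following is a minimal sketch, not an official GAMP or ISPE schema. The three yes/no questions, the function names, and the assumption that each category inherits the controls of the ones before it are ours; the control names are taken from this article.

```python
from enum import IntEnum


class IntelligenceType(IntEnum):
    """The four categories from Table 1, ordered by how much behaviour can change."""
    DETERMINISTIC = 1
    STATIC_AI = 2
    GOVERNED_ADAPTIVE = 3
    LIVING = 4


# Controls named in this article, grouped by the category that first needs them.
ADDED_CONTROLS = {
    IntelligenceType.DETERMINISTIC: [
        "requirements traceability",
        "functional testing",
        "release-based change management",
    ],
    IntelligenceType.STATIC_AI: ["dataset qualification", "output verification"],
    IntelligenceType.GOVERNED_ADAPTIVE: [
        "model update governance",
        "retraining triggers",
        "drift detection",
        "performance thresholds",
    ],
    IntelligenceType.LIVING: [
        "continuous performance review",
        "exception trending",
        "human oversight checkpoints",
        "documented intervention rules",
    ],
}


def classify(uses_trained_model: bool,
             retrained_under_change_control: bool,
             learns_continuously_in_production: bool) -> IntelligenceType:
    """Hypothetical decision rule: three yes/no answers pick one category."""
    if not uses_trained_model:
        return IntelligenceType.DETERMINISTIC
    if learns_continuously_in_production:
        return IntelligenceType.LIVING
    if retrained_under_change_control:
        return IntelligenceType.GOVERNED_ADAPTIVE
    return IntelligenceType.STATIC_AI


def controls_for(kind: IntelligenceType) -> list[str]:
    """Accumulate controls from the simplest category up to the given one (assumed cumulative)."""
    controls: list[str] = []
    for level in IntelligenceType:
        if level <= kind:
            controls.extend(ADDED_CONTROLS[level])
    return controls


# Example: a quality-risk model retrained periodically under documented change control.
kind = classify(uses_trained_model=True,
                retrained_under_change_control=True,
                learns_continuously_in_production=False)
print(kind.name, controls_for(kind))
```

Treating the controls as cumulative is a design choice: it keeps the conventional validation baseline in place and makes the extra effort for each step up the taxonomy explicit.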

Regulators are already thinking this way

The FDA’s work on AI/ML-based Software as a Medical Device recognised that adaptive models challenge conventional oversight, and introduced the concept of a Predetermined Change Control Plan to manage model modifications within a controlled framework [4][5][9]. Even outside formal SaMD, the principle holds: when change is expected, you need documented boundaries for that change, evidence that it remains safe for its intended use, and monitoring that confirms ongoing performance. [4][5][9]
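The idea of documented boundaries for expected change can be made concrete with a simple pre-release check. The sketch below is illustrative only and does not reproduce the FDA's format for a Predetermined Change Control Plan. The class names, field names, change-type labels, and the use of accuracy as the single metric are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangePlan:
    """Hypothetical boundaries agreed before any model update is attempted."""
    allowed_change_types: frozenset
    min_accuracy: float
    max_accuracy_drop: float


@dataclass(frozen=True)
class ProposedUpdate:
    """A candidate model change with before-and-after performance evidence."""
    change_type: str
    baseline_accuracy: float
    new_accuracy: float


def boundary_violations(update: ProposedUpdate, plan: ChangePlan) -> list[str]:
    """Return every documented boundary the proposed update would breach."""
    problems = []
    if update.change_type not in plan.allowed_change_types:
        problems.append(f"change type '{update.change_type}' is outside the plan")
    if update.new_accuracy < plan.min_accuracy:
        problems.append("accuracy is below the documented minimum")
    if update.baseline_accuracy - update.new_accuracy > plan.max_accuracy_drop:
        problems.append("accuracy dropped more than the plan allows")
    return problems


# Hypothetical plan: only same-architecture retraining is pre-approved.
plan = ChangePlan(
    allowed_change_types=frozenset({"retrain_same_architecture"}),
    min_accuracy=0.90,
    max_accuracy_drop=0.02,
)
in_scope = ProposedUpdate("retrain_same_architecture", 0.94, 0.95)
out_of_scope = ProposedUpdate("new_input_features", 0.94, 0.88)

print(boundary_violations(in_scope, plan))      # empty list: proceed under the plan
print(boundary_violations(out_of_scope, plan))  # non-empty: route to full change control
```

An update with no violations can follow the pre-agreed route. Anything else falls back to conventional change control and revalidation.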

What the leading vendors are actually doing right now

Kneat describes optional AI capabilities within Kneat Gx, covering content generation, review, analysis, and conversational insight, with a strong emphasis on governance, auditability, data integrity, and operation inside a validated platform [11][12][13]. ValGenesis has launched the next generation of VAL within its digital validation stack, positioning it as governed, human-led AI for protocol and trace generation, gap assessment, execution review, evidence analysis, and anomaly identification inside controlled workflows. [14][15][16]

Neither of these is “living” in the strongest sense. They’re governed AI systems embedded in digital validation platforms, designed to preserve human review and procedural control. That’s actually the right answer for where most life sciences organisations are today. [11][14]

What this means for QA teams

QA is ultimately about one thing: evidence that a system remains fit for purpose. In a Living Intelligence scenario, that evidence base shifts. You can’t rely on a one-time validation package. You need continuous performance review, exception trending, human oversight checkpoints, and documented intervention rules [6][7][10]. In other words, your quality system itself may need to become more dynamic to govern dynamic AI responsibly.

The closer a tool moves toward continuous adaptation, the less adequate static validation habits become on their own.

“Living intelligence systems can sense, learn, adapt and evolve through the use of AI, advanced sensors and biotechnology, reshaping how health care understands and responds to the real world.” — Amy Webb, Quantitative futurist, Future Today Institute

The bottom line for your steering committee

Living Intelligence isn’t a futurist buzzword. It’s a prompt to stop treating all AI as interchangeable, and to build validation strategies that reflect how a system’s intelligence actually behaves in production [1][3][8].

Start by classifying what you have. Not all AI tools in your pipeline require the same assurance model. Some are deterministic, some are locked, some are governed-adaptive, and a smaller subset might genuinely be approaching continuous adaptation. Knowing which is which is the foundation of getting this right. [6][7][10]


References

[1] Dr. Andrée Bates, “The Future of AI in Pharma Is Living Intelligence,” LinkedIn, Oct. 2025. https://www.linkedin.com/pulse/future-ai-pharma-living-intelligence-dr-andr%C3%A9e-bates-rph3e/

[2] Eularis, “Blog,” 2026. https://eularis.com/blog/

[3] J. Burton, “How to transform pharma with Living Intelligence,” LinkedIn, 2025. https://www.linkedin.com/posts/burtonjeff_the-future-of-ai-is-living-intelligence-activity-7338262663006720000-7oc4

[4] U.S. FDA, “Artificial Intelligence in Software as a Medical Device,” 2025. https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-software-medical-device

[5] U.S. FDA, “Proposed Regulatory Framework for Modifications to AI/ML-Based SaMD,” Discussion Paper, 2019.

[6] DHC Consulting, “AI System Validation | GxP, EU AI Act & ISPE GAMP AI Guide,” 2026. https://www.dhc-consulting.com/en/gxp-compliance/ai-validation/

[7] EY, “AI validation in pharma: maintaining compliance and trust,” 2025. https://www.ey.com/en_ch/insights/life-sciences/gxp-and-ai-tools-compliance-validation-and-trust-in-pharma

[8] M. Wood, “How Living Intelligence uses AI in pharma,” LinkedIn, Jun. 2025. https://www.linkedin.com/posts/melony-wood-07928872_the-future-of-ai-is-living-intelligence-activity-7338255645076271105-fgKJ

[9] Wilson Sonsini, “Newly Released FDA Action Plan for AI/ML-Based SaMD,” 2021.

[10] IntuitionLabs, “ISPE GAMP AI Guide: Validation Framework for GxP Systems,” 2026. https://intuitionlabs.ai/articles/ispe-gamp-ai-validation-guide-gxp

[11] Kneat, “VALIDATE 2026: Accelerating digital maturity,” May 2026. https://kneat.com/article/validate-2026/

[12] Kneat, “2026 State of Validation Report,” May 2026. https://kneat.com/resource/state-of-validation-report-2026/

[13] Kneat, “Kneat AI,” Mar. 2026. https://kneat.com/kneat-ai/

[14] ValGenesis, “ValGenesis Launches the Next Generation of VAL™,” Apr. 2026. https://www.valgenesis.com/news/valgenesis-launches-the-next-generation-of-val-bringing-governed-ai-to-the-validation-lifecycle

[15] Cleanroom Technology, “ValGenesis unveils AI-driven validation and process platform,” Jul. 2025. https://cleanroomtechnology.com/valgenesis-launches-first-ai-enabled-platform-to-unify

[16] ValGenesis, “ValGenesis to Exhibit Smart GxP™ at 2026 ISPE Europe Annual Conference,” Feb. 2026. https://finance.yahoo.com/news/valgenesis-exhibit-smart-gxp-2026-140000731.html

Author: Stephen

Founder of HealthyData.Science · 20+ years in life sciences compliance & software validation · MSc in Data Science & Artificial Intelligence.